Web crawler - Python 3.4.1: requests module raises 'list' object has no attribute 'get'

Problem description

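I wrote a crawler in Python to scrape IP addresses. The site has anti-crawler measures, so I route requests through proxies and use a thread pool with 10 threads to do the scraping. However, it fails immediately with 'list' object has no attribute 'get', and I don't know how to fix it. My code is below.

    from bs4 import BeautifulSoup
    import requests
    import re
    import time
    from multiprocessing import Pool
    import pymysql
    import random
    from threadpool import *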

    # Random request headers
    def randHeader():
        head_connection = ['Keep-Alive', 'close']
        head_accept = ['text/html, application/xhtml+xml, */*']
        head_accept_language = ['zh-CN,fr-FR;q=0.5', 'en-US,en;q=0.8,zh-Hans-CN;q=0.5,zh-Hans;q=0.3']
        head_user_agent = [
            'Mozilla/5.0 (Windows NT 6.3; WOW64; Trident/7.0; rv:11.0) like Gecko',
            'Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/28.0.1500.95 Safari/537.36',
            'Mozilla/5.0 (Windows NT 6.1; WOW64; Trident/7.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; rv:11.0) like Gecko)',
            'Mozilla/5.0 (Windows; U; Windows NT 5.2) Gecko/2008070208 Firefox/3.0.1',
            'Mozilla/5.0 (Windows; U; Windows NT 5.1) Gecko/20070309 Firefox/2.0.0.3',
            'Mozilla/5.0 (Windows; U; Windows NT 5.1) Gecko/20070803 Firefox/1.5.0.12',
            'Opera/9.27 (Windows NT 5.2; U; zh-cn)',
            'Mozilla/5.0 (Macintosh; PPC Mac OS X; U; en) Opera 8.0',
            'Opera/8.0 (Macintosh; PPC Mac OS X; U; en)',
            'Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.12) Gecko/20080219 Firefox/2.0.0.12 Navigator/9.0.0.6',
            'Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Win64; x64; Trident/4.0)',
            'Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0)',
            'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.1; WOW64; Trident/6.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; InfoPath.2; .NET4.0C; .NET4.0E)',
            'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Maxthon/4.0.6.2000 Chrome/26.0.1410.43 Safari/537.1 ',
            'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.1; WOW64; Trident/6.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; InfoPath.2; .NET4.0C; .NET4.0E; QQBrowser/7.3.9825.400)',
            'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:21.0) Gecko/20100101 Firefox/21.0 ',
            'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.92 Safari/537.1 LBBROWSER',
            'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.1; WOW64; Trident/6.0; BIDUBrowser 2.x)',
            'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/3.0 Safari/536.11',
        ]
        header = {
            'Connection': head_connection[0],
            'Accept': head_accept[0],
            'Accept-Language': head_accept_language[1],
            'User-Agent': head_user_agent[random.randrange(0, len(head_user_agent))]
        }
        return header

    def randproxy():
        config = {
            'host': '127.0.0.1',
            'port': 3306,
            'user': 'root',
            'password': '',
            'db': 'autohome',
            'charset': 'utf8',
            # 'cursorclass': pymysql.cursors.DictCursor,
        }
        # Create the connection
        list_ip = []
        connection = pymysql.connect(**config)
        cursor = connection.cursor()
        sql = 'select ip,port from can_use'
        try:
            cursor.execute(sql)
            results = cursor.fetchall()
            for row in results:
                data = {
                    'ip': row[0],
                    'port': row[1]
                }
                list_ip.append(data)
        except:
            print('error')
            # time.sleep(1)
        finally:
            connection.close()
        return random.choice(list_ip)

    def download(url):
        proxy = randproxy()
        proxy_host = 'http://' + proxy['ip'] + ':' + proxy['port']
        proxy_temp = {'http': proxy_host}
        parse_url = requests.get(url[0],headers=randHeader(),timeout=12,proxies=proxy_temp)
        soup = BeautifulSoup(parse_url.text,'lxml')
        pre_proxys = soup.find('table', id='ip_list').find_all('tr')
        for i in pre_proxys[1:]:
            try:
                td = i.find_all('td')
                id = td[1].get_text()
                port = td[2].get_text()
                # Run the SQL statement
                config = {
                    'host': '127.0.0.1',
                    'port': 3306,
                    'user': 'root',
                    'password': '',
                    'db': 'autohome',
                    'charset': 'utf8',
                    'cursorclass': pymysql.cursors.DictCursor,
                }
                # Create the connection
                connection = pymysql.connect(**config)
                data = {
                    'ip':id,
                    'port':port,
                }
                with connection.cursor() as cursor:
                    # Run the SQL statement to insert a record
                    sql = 'INSERT INTO proxyip (ip,port) VALUES (%s,%s)'
                    cursor.execute(sql, (data['ip'],data['port']))
                # Autocommit is not enabled by default, so commit explicitly to save the executed statement
                connection.commit()
            except:
                print('error')
                # time.sleep(1)
            finally:
                connection.close()
            time.sleep(2)

    def proxy_url_list():
        url = 'http://www.xicidaili.com/wt/{}'
        url_list = []
        for i in range(1,1387):
            new_url = url.format(i)
            url_list.append(new_url)
        return url_list

    if __name__ =='__main__':
        pool = ThreadPool(2)
        requests = makeRequests(download,proxy_url_list())
        [pool.putRequest(req) for req in requests]
        pool.wait()
        # url = 'http://www.baidu.com'
        # proxy = randproxy()
        # proxy_host = 'http://' + proxy['ip'] + ':' + proxy['port']
        # proxy_temp = {'http': proxy_host}
        # test = requests.get(url,headers=randHeader(),timeout=10,proxies=proxy_temp)
        # soup = BeautifulSoup(test.text,'lxml')
        # print(soup)

I can't upload a screenshot, so for now I can only paste the error output. The same traceback is printed for every request:

    Traceback (most recent call last):
      File 'C:\Python\lib\site-packages\threadpool.py', line 158, in run
        result = request.callable(*request.args, **request.kwds)
      File 'C:/qichezhijia/proxyspider.py', line 80, in download
        parse_url = requests.get(url[0],headers=randHeader(),timeout=12,proxies=proxy_temp)
    AttributeError: 'list' object has no attribute 'get'

Answers

Answer 1: What does makeRequests do? You have probably rebound requests to a list, so the later call to requests.get(*) naturally fails.
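To illustrate the point in Answer 1, here is a minimal sketch of the same failure that runs without any third-party packages. The standard-library json module stands in for requests, and the names fetch, first_result and message are made up for this example.

```python
import json  # stand-in for the `requests` module in the question


def fetch():
    # `json` is looked up when the function is CALLED, not when it is defined
    return json.dumps({"ok": True})


first_result = fetch()  # works: `json` still refers to the module

# Rebinding the module's name to a list, just as
# `requests = makeRequests(...)` does in the question
json = [1, 2, 3]

try:
    fetch()
except AttributeError as exc:
    message = str(exc)

print(first_result)
print(message)
```

The second call fails because the function body now finds a list under the name it expected to be a module, which is the same situation as download() calling requests.get after requests has been reassigned.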

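Answer 2: makeRequests works much like Python's map function. It takes two arguments (function, list()) and feeds each item of the list to the function as its argument. Inside download, requests is meant to refer to the requests module, so the names have probably clashed. It is also possible that url[0] is wrong. I'll debug it when I get back.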
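The map-like behaviour described in Answer 2 can be sketched as follows. This is not threadpool's actual code: make_work_items is a hypothetical stand-in that assumes each non-tuple item is passed to the function as its single positional argument, and download here is a dummy that only reports what it received.

```python
def make_work_items(func, args_list):
    """Hypothetical stand-in for makeRequests: pair the function with each item."""
    return [(func, [item]) for item in args_list]


def download(url):
    # Under the assumption above, `url` is the URL string itself,
    # so url[0] is only its first character
    return url, url[0]


urls = ['http://www.xicidaili.com/wt/{}'.format(i) for i in range(1, 4)]
work_items = make_work_items(download, urls)

# Call each work item the way a worker thread would: func(*args)
results = [func(*args) for func, args in work_items]

for full_url, first_char in results:
    print(full_url, '->', repr(first_char))
```

If that assumption holds for the real library, url[0] in the question would pass a single character to requests.get, so it is worth checking once the name clash is fixed.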

Answer 3: It's a name clash. I suggest changing these lines

    requests = makeRequests(download,proxy_url_list())
    [pool.putRequest(req) for req in requests]

to

    myrequests = makeRequests(download,proxy_url_list())
    [pool.putRequest(req) for req in myrequests]

and then trying again.
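For comparison, the same pattern can be sketched with the standard library's concurrent.futures instead of threadpool. This swaps in a different thread pool from the one in the question, and download is a placeholder with no network access. The pages and workers parameters and the crawl function are names invented for this sketch.

```python
from concurrent.futures import ThreadPoolExecutor


def proxy_url_list(pages=3):
    # Same URL pattern as the question, limited to a few pages for the demo
    url = 'http://www.xicidaili.com/wt/{}'
    return [url.format(i) for i in range(1, pages + 1)]


def download(url):
    # Placeholder: the real function would call requests.get(url, ...) here.
    # Note that `url` is used directly, not url[0].
    return 'fetched ' + url


def crawl(urls, workers=2):
    # No local variable is called `requests`, so the module name stays intact
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(download, urls))


if __name__ == '__main__':
    for line in crawl(proxy_url_list()):
        print(line)
```

Whichever pool is used, the important part is that no variable in the script reuses the name of an imported module.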

相关文章:

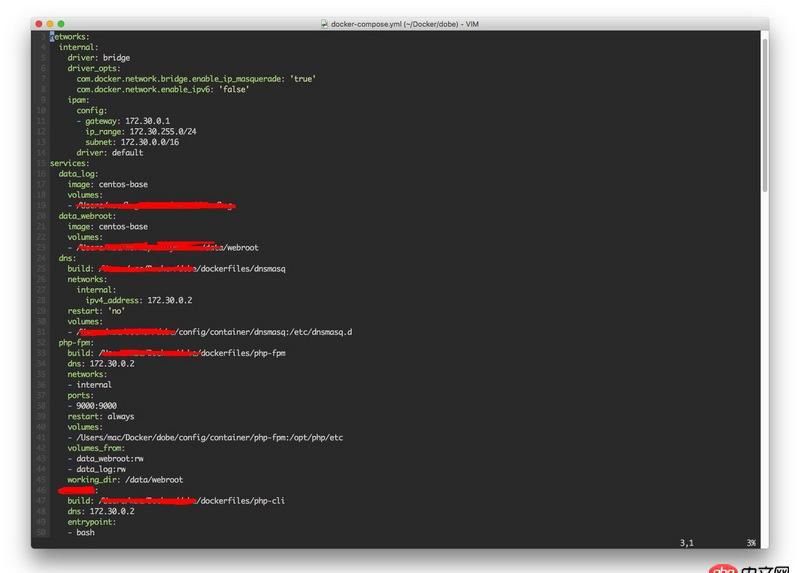

1. docker网络端口映射,没有方便点的操作方法么?2. dockerfile - [docker build image失败- npm install]3. docker gitlab 如何git clone?4. docker-compose中volumes的问题5. boot2docker无法启动6. docker api 开发的端口怎么获取?7. dockerfile - 我用docker build的时候出现下边问题 麻烦帮我看一下8. 关docker hub上有些镜像的tag被标记““This image has vulnerabilities””9. docker不显示端口映射呢?10. mysql - phpmyadmin怎么分段导出数据啊?

网公网安备

网公网安备